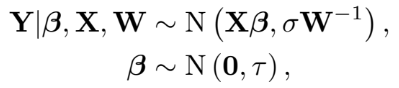

In the Regularized Adjusted Plus-Minus (RAPM) model, one of the perceived challenges is understanding the error associated with the resulting posterior RAPM value a player receives. In a previous post, we noted that RAPM is a Bayesian model in which we assume that “player contribution” can be estimated through weighted offensive ratings conditioned on the players participating in a given stint. Through this assumption, the weighted offensive rating is assumed to be a Gaussian distribution centered at the linear combinations of players with some variance, sigma. Furthermore, the player contribution has a “prior” distribution suggesting that player weights are viewed as mean zero Gaussian processes with some underlying variance, tau. Mathematically,

where Y is the vector of offensive ratings, W is a diagonal matrix holding the number of possessions played in each stint, X is the player-stint matrix, and (sigma, tau) are the likelihood and prior variances, respectively. Putting this all together, and leveraging conjugate distributions, we find that the posterior distribution is indeed a Gaussian distribution:

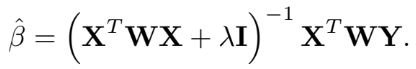
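For reference, the model described above can be written out as follows. This is a sketch under one assumed parameterization: the possession weights enter the likelihood through $W^{-1}$, and $\lambda$ is shorthand for the ratio of the likelihood variance to the prior variance.

```latex
\begin{aligned}
Y \mid \beta &\sim \mathcal{N}\left(X\beta,\; \sigma W^{-1}\right), \\
\beta &\sim \mathcal{N}\left(0,\; \tau I\right), \\
\lambda &= \sigma / \tau .
\end{aligned}
```

Written this way, stints with more possessions carry less noise and pull harder on the estimate, and the penalty $\lambda$ grows as the prior variance $\tau$ shrinks.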

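From this seemingly tedious calculation, we find that the RAPM estimate for each player is given by

$$\hat{\beta} = \left(X^T W X + \lambda I\right)^{-1} X^T W Y,$$

where $\lambda$ is the prior weight, the ratio sigma / tau.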

This is exactly the RAPM estimate that you see given on many of those other fancy websites. To put this into the offensive-defensive RAPM context, let’s understand again what this equation is doing. First, we have the offensive rating, Y, which is effectively points scored per 100 possessions (not differential as in some other forms of RAPM). Multiplying by W, the possessions played, turns the quantity WY into “100 times points scored.” Since the design matrix, X, is stints by players, the value $X^T W Y$ is merely identifying the stints for which offensive players contributed points and defensive players discounted points, and adding such stints together. This quantity is effectively “100* plus-minus” for each player.

Now, that inverse quantity… The quantity $X^T W X$ is counting the number of possessions each “tandem” has played in. Each diagonal element is the number of possessions that player has been on the court for; summed together, the diagonal is “10* the number of possessions played,” as there are 10 players on the court. The off-diagonal elements count the possessions a pair of players has shared, accumulated over every stint they play together. In a previous post, we saw that just using this quantity in the inverse led to a mathematically unreasonable solution: reducibility… which led to infinite variances. Here, we rectify this by introducing that prior weight. This is a mechanism that biases the final result but allows us to obtain a reasonable variance on the final estimate. This means the inverse quantity is “inverted” possessions played between teammates. The inverse identifies some extent of the correlation between players playing together (or against each other).
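To make the possession-counting interpretation concrete, here is a small sketch on made-up data. The three stints, the 0/1 indicator coding, and the value of `lam` are all assumptions chosen for illustration; none of it comes from the real stint file.

```python
import numpy as np

# Toy player-stint matrix (hypothetical data): 3 stints, 4 player columns.
# Players 0 and 1 share every stint they play, so their columns are identical.
X = np.array([
    [1.0, 1.0, 0.0, 0.0],
    [1.0, 1.0, 1.0, 0.0],
    [0.0, 0.0, 1.0, 1.0],
])
possessions = np.array([10.0, 20.0, 30.0])  # the diagonal of W

# X^T W X, computed without building the full diagonal matrix W.
gram = X.T @ (possessions[:, None] * X)

# Diagonal: possessions each player was on the floor for.
possessions_per_player = np.diag(gram)

# Off-diagonal: possessions a pair of players shared.
shared_0_1 = gram[0, 1]

# Identical columns make X^T W X singular, so it cannot be inverted.
rank_unregularized = np.linalg.matrix_rank(gram)

# Adding the prior weight to the diagonal restores full rank.
lam = 5.0  # illustrative value only
rank_regularized = np.linalg.matrix_rank(gram + lam * np.eye(X.shape[1]))
```

Players 0 and 1 are indistinguishable in this toy data, which is the reducibility problem in miniature: without the added diagonal term there is no unique way to split credit between them.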

Therefore, the final estimate is in “effective points per 100 possessions given some prior variance tau” for each player. In code form, using some pre-determined tau (I selected 5000 because that’s from literature) we have

Note that I use two Ratings vectors. One is with weights pre-multiplied for ease of computation later. The other is the true observed ratings used for error analysis.

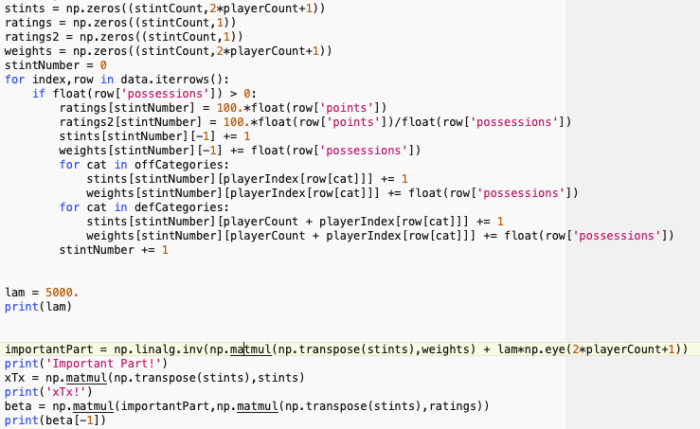

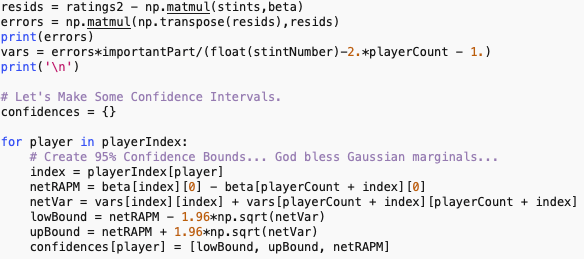
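For readers who want something to type in, a minimal NumPy sketch of the estimate follows. The function name `rapm_estimate`, its argument names, and the toy data are hypothetical and are not the variable names used in the post. The sketch also assumes the intercept is the last column of the design matrix and is penalized along with the player columns, which is what a single penalty on the whole diagonal implies.

```python
import numpy as np

def rapm_estimate(X, possessions, y, lam):
    """Solve (X^T W X + lam * I) beta = X^T W y for beta.

    X           : (n_stints, n_columns) design matrix; the last column is
                  assumed to be all ones so that beta[-1] is the intercept.
    possessions : (n_stints,) possessions per stint, the diagonal of W.
    y           : (n_stints,) offensive rating per stint.
    lam         : ridge penalty (the prior weight).
    """
    weighted_X = possessions[:, None] * X               # W X
    lhs = X.T @ weighted_X + lam * np.eye(X.shape[1])   # X^T W X + lam * I
    rhs = weighted_X.T @ y                              # X^T W y
    return np.linalg.solve(lhs, rhs)

# Toy data (hypothetical): 50 stints, 4 player columns plus an intercept.
rng = np.random.default_rng(0)
X_toy = np.column_stack([
    rng.integers(0, 2, size=(50, 4)).astype(float),
    np.ones(50),
])
poss_toy = rng.integers(1, 20, size=50).astype(float)
y_toy = rng.normal(100.0, 15.0, size=50)

beta_toy = rapm_estimate(X_toy, poss_toy, y_toy, lam=5000.0)
baseline_rating = beta_toy[-1]  # intercept term
```

Solving the linear system with `np.linalg.solve` avoids forming the inverse explicitly, which is usually both faster and more stable when only the estimate is needed.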

The value beta[-1] is the intercept term, effectively meaning “baseline offensive rating.” Here that value was approximately 98. Courtesy of Ryan Davis, we obtain stint data and run this code to get the following output:

It’s not quite what we find on his website, but the two are close. In fact, in his tutorial, Davis has Nurkic as 13th overall and Ingles as 14th overall. Above we have them at 12th and 13th. However, LeBron James is 19th in the above list while he drops to 36th on Davis’ list. Also note that Davis’ RAPM estimates are smaller, which indicates his tau is even smaller than ours (leading to a larger lambda). Regardless, we have effectively the same results.

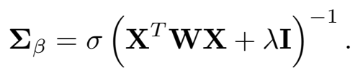

What we also get from the tedious computation is the variance term associated with each RAPM estimate:

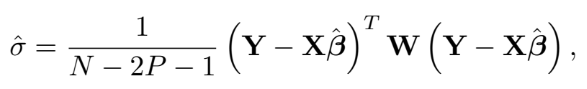

Since this is a regression model, we are able to estimate sigma by computing the residuals of the model, $R = Y - X\hat{\beta}$:

$$\hat{\sigma} = \frac{R^T W R}{N - 2P - 1}$$

where N is the number of stints observed, P is the total number of players observed, and the term N-2P-1 identifies the number of degrees of freedom within the regression model. Note that there is a subtlety here: we assumed that every player who has played a single possession on offense has also played at least a single possession on defense. This is not a guarantee; therefore we may change 2P to be P_o + P_d, where P_o is the number of players who have played at least one offensive possession and P_d is the number of players who have played at least one defensive possession.
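The residual-based estimate, including the degrees-of-freedom subtlety, can be sketched as below. The helper names and the toy numbers are hypothetical. Counting columns with at least one nonzero entry is one assumed way to obtain P_o + P_d + 1 when the design matrix carries separate offensive and defensive columns plus an intercept.

```python
import numpy as np

def count_active_columns(X):
    """Number of columns with at least one nonzero entry.

    With separate offensive and defensive columns plus an intercept,
    this is P_o + P_d + 1 rather than 2P + 1.
    """
    return int(np.count_nonzero(np.abs(X).sum(axis=0)))

def estimate_sigma(X, possessions, y, beta, n_parameters):
    """Weighted residual variance: R^T W R / (N - n_parameters)."""
    residuals = y - X @ beta                           # R = Y - X beta
    weighted_ss = np.sum(possessions * residuals**2)   # R^T W R for diagonal W
    dof = X.shape[0] - n_parameters
    if dof <= 0:
        raise ValueError("not enough stints for the requested degrees of freedom")
    return weighted_ss / dof

# Toy example (hypothetical numbers): 5 stints, 2 player columns, 1 intercept.
X_toy = np.array([
    [1.0, 0.0, 1.0],
    [0.0, 1.0, 1.0],
    [1.0, 1.0, 1.0],
    [1.0, 0.0, 1.0],
    [0.0, 1.0, 1.0],
])
beta_toy = np.array([1.0, -1.0, 100.0])
y_toy = X_toy @ beta_toy + np.array([1.0, -1.0, 2.0, 0.0, -2.0])
poss_toy = np.array([1.0, 2.0, 3.0, 4.0, 5.0])

n_params = count_active_columns(X_toy)
sigma_hat = estimate_sigma(X_toy, poss_toy, y_toy, beta_toy, n_params)
```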

At this point, much of the focus of RAPM is placed on determining the prior variance, tau. Typically, folks will eschew prior variance estimation by instead applying cross-validation to identify a “best” lambda term. In the outside literature, this value has ranged between 500 and 5000. In conjunction with estimation of sigma from the regression setting, we can extract an estimate of the prior variance through $\hat{\tau} = \hat{\sigma} / \hat{\lambda}$.
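A rough sketch of the cross-validation route is given below. Everything about the setup is an assumption made for illustration: the random fold split, the possession-weighted squared error used as the score, the candidate grid, the toy data, and the function names. Published RAPM implementations may split and score differently.

```python
import numpy as np

def ridge_fit(X, w, y, lam):
    """Weighted ridge solution of (X^T W X + lam * I) beta = X^T W y."""
    WX = w[:, None] * X
    return np.linalg.solve(X.T @ WX + lam * np.eye(X.shape[1]), WX.T @ y)

def cross_validate_lambda(X, w, y, lambdas, n_folds=5, seed=0):
    """Return the candidate penalty with the lowest weighted held-out error."""
    rng = np.random.default_rng(seed)
    n = X.shape[0]
    folds = np.array_split(rng.permutation(n), n_folds)
    scores = []
    for lam in lambdas:
        total = 0.0
        for held_out in folds:
            train = np.setdiff1d(np.arange(n), held_out)
            beta = ridge_fit(X[train], w[train], y[train], lam)
            resid = y[held_out] - X[held_out] @ beta
            total += np.sum(w[held_out] * resid**2)
        scores.append(total / np.sum(w))
    best = int(np.argmin(scores))
    return lambdas[best], np.array(scores)

def implied_prior_variance(sigma_hat, lam_hat):
    """tau_hat = sigma_hat / lam_hat."""
    return sigma_hat / lam_hat

# Toy data (hypothetical): 60 stints, 4 player columns plus an intercept.
rng = np.random.default_rng(1)
X_toy = np.column_stack([
    rng.integers(0, 2, size=(60, 4)).astype(float),
    np.ones(60),
])
w_toy = rng.integers(1, 20, size=60).astype(float)
y_toy = rng.normal(100.0, 15.0, size=60)

grid = [0.1, 1.0, 10.0, 100.0]
best_lam, cv_scores = cross_validate_lambda(X_toy, w_toy, y_toy, grid)

# Illustrative numbers only: a sigma estimate of 10000 with lambda = 5000.
tau_example = implied_prior_variance(10000.0, 5000.0)
```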

What’s even nicer is that since we have a Gaussian posterior distribution, we know the highest posterior density (HPD) interval is equivalent to the standard confidence interval for Gaussian random variables. In this case, we can follow the simple “estimate +/- critical value × standard error” formulation. For a single player, we can look at the marginal distribution, which is also itself a Gaussian distribution. For a lineup of interest, we look at the joint distribution of the individual player marginals.
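The “estimate +/- critical value × standard error” recipe is short enough to write down directly. The function name and the example numbers here are hypothetical; for a single player, the variance passed in would be that player's diagonal entry of the posterior covariance matrix.

```python
from statistics import NormalDist

def gaussian_interval(estimate, variance, level=0.95):
    """Central interval for a Gaussian marginal: estimate +/- z * sqrt(variance)."""
    z = NormalDist().inv_cdf(0.5 + level / 2.0)  # about 1.96 when level=0.95
    half_width = z * variance ** 0.5
    return estimate - half_width, estimate + half_width

# Illustrative numbers only: an estimate of 2.0 with variance 4.0.
low, high = gaussian_interval(2.0, 4.0)
```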

Application to Yearly Data

Let’s apply the above techniques to the 2018-19 NBA Season. Courtesy of Ryan Davis, we obtain a stint file for which a row of data corresponds to indicators for the five players on offense, indicators for the five players on defense, the number of possessions played, and the number of points scored. From this data set, we can extract the values of Y, W, and X accordingly. For simplicity, let’s just assume that tau = 5000. The above table shows the “Top 25 players.” In terms of coding the error, we simply run:

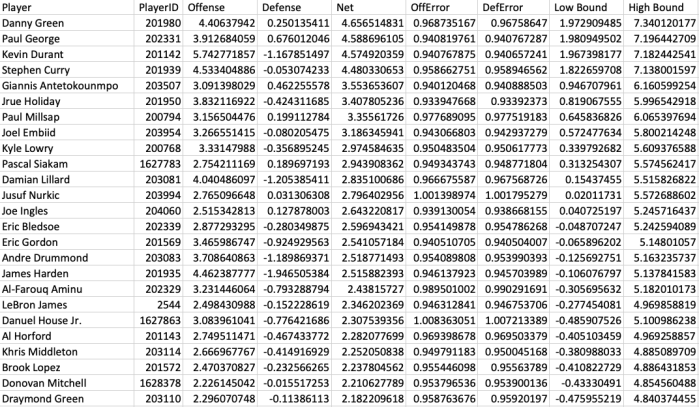
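A hedged sketch of the same error computation in NumPy follows. It assumes the posterior covariance takes the form sigma * (X^T W X + lambda * I)^{-1}, which is what the conjugate Gaussian setup gives when the likelihood variance is sigma * W^{-1}. The function names and toy inputs are hypothetical.

```python
import numpy as np

def posterior_covariance(X, possessions, sigma_hat, lam):
    """sigma_hat * (X^T W X + lam * I)^{-1}, under the assumed posterior form."""
    gram = X.T @ (possessions[:, None] * X)
    return sigma_hat * np.linalg.inv(gram + lam * np.eye(X.shape[1]))

def marginal_bounds(beta, cov, z=1.96):
    """Per-coefficient bounds: beta +/- z * sqrt(diagonal of cov)."""
    std_err = np.sqrt(np.diag(cov))
    return beta - z * std_err, beta + z * std_err

# Toy inputs (hypothetical), small enough to check by hand.
X_toy = np.eye(2)
poss_toy = np.array([1.0, 3.0])
cov_toy = posterior_covariance(X_toy, poss_toy, sigma_hat=2.0, lam=1.0)

beta_toy = np.array([0.5, -0.5])
lower, upper = marginal_bounds(beta_toy, cov_toy)
```

The off-diagonal entries of the covariance matrix are what feed the lineup-level and net-rating intervals, so it is worth keeping the full matrix rather than only its diagonal.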

Replicated with variance terms and marginal confidence bounds, we now have

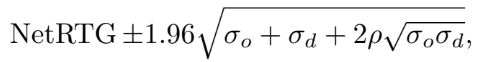

Here we see that Danny Green is atop the leaderboard. While this would suggest that Danny Green is the biggest contributor to net ratings, we know this is really not the case. What’s more important is that we should take a look at his variance term. Looking at the marginal of Danny Green’s Offensive and Defensive ratings, we can compute the confidence interval for the net rating. In this case, the 95% confidence interval for Danny Green’s net rating is given by

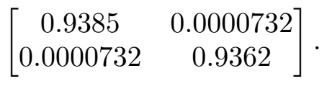

where sigma_o and sigma_d are Green’s offensive and defensive RAPM variances, respectively. The value rho is the correlation between Danny Green’s offensive and defensive numbers. For this exercise, Green’s offensive/defensive covariance matrix is given by

Using this variance-covariance matrix, Green’s Net Rating of 4.66 is really viewed as some value in between

Comparing this to the rest of the league, we see that 39 other players fit within this confidence bound, indicating that despite being the “league leader,” Danny Green really identifies within the Top 39 players in the league. This is a “best case scenario” for identifiability. In fact, if we grab the 200th player in the league, Kyle Korver, we find that his confidence interval is [-1.21, 4.16]. This indicates that Korver is equivalent to 460 other players in the league, ranging from Giannis Antetokounmpo (6th) to Damyean Dotson (464th).

Now let’s extend this out to a starting unit. For the sake of argument, let’s look at a “starting” lineup for the Brooklyn Nets during the 2018-19 NBA season. Using starts as a proxy, suppose the starting lineup is D’Angelo Russell, Joe Harris, Jarrett Allen, Rodions Kurucs, and Caris LeVert. Using the single-season RAPM estimates above, we obtain offense-defense ratings for Russell, Harris, Kurucs, LeVert, and Allen (respectively):

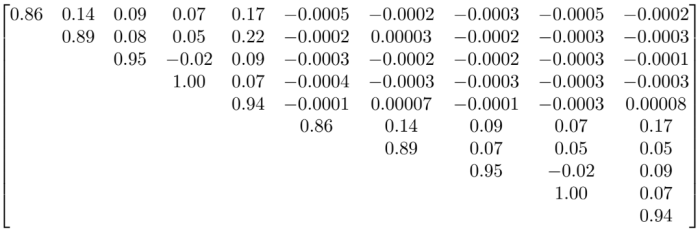

with associated variance-covariance matrix:

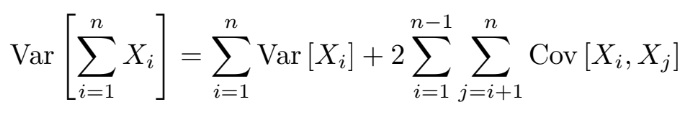

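The expected Net Rating of this lineup is then 0.5953. Constructing the univariate variance term, we rely on the variance of a sum of correlated variables derivation, writing $Z_i$ for the individual offensive and defensive ratings:

$$\mathrm{Var}\left(\sum_{i} Z_i\right) = \sum_{i} \mathrm{Var}(Z_i) + 2 \sum_{i < j} \mathrm{Cov}(Z_i, Z_j)$$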

Reading these right from the variance-covariance matrix above, we obtain a stint net rating variance of 12.7695. This indicates that the confidence interval for the expected net rating of the Brooklyn Nets’ starting lineup is [-6.4086, 7.5992], which is quite a considerable range over the span of 100 possessions.
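The arithmetic behind that interval can be checked in a few lines. The sketch assumes every term enters the net rating with a plus sign, as the “sum of correlated variables” wording suggests; a term entering with a minus sign would flip the sign of its covariances. The 2x2 matrix is hypothetical, while the net rating and variance are the figures quoted above.

```python
import numpy as np

def sum_variance(cov):
    """Variance of a sum of correlated terms: the sum of every covariance entry."""
    cov = np.asarray(cov, dtype=float)
    ones = np.ones(cov.shape[0])
    return float(ones @ cov @ ones)

# Hypothetical 2x2 covariance matrix, small enough to check by hand.
toy_cov = [[2.0, 0.5],
           [0.5, 1.0]]
toy_variance = sum_variance(toy_cov)  # 2.0 + 1.0 + 2 * 0.5

# Figures quoted in the text for the Nets lineup.
net_rating = 0.5953
net_variance = 12.7695
half_width = 1.96 * np.sqrt(net_variance)
interval = (net_rating - half_width, net_rating + half_width)
```

With a standard deviation of roughly 3.57 points per 100 possessions, the half-width of about 7 points dwarfs the point estimate of 0.5953.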

It should be noted that we treat this type of analysis with the utmost of care. Recall that we are only using roughly 71,000 stints. For 530 NBA players, this means we only have at best 1.5e-17 PERCENT of all possible 10-man lineups. So deviating outside of any observed lineups is quite prohibitive. Therefore, while building a dream lineup around Joel Embiid, Anthony Davis, Andre Drummond, Jusuf Nurkic, and Justise Winslow would have phenomenal RAPM considerations, it is not part of the sampling frame and therefore not representable (read that as not meaningful) in the results.

In intel analyst speak: “We cannot determine what’s going on in Zimbabwe if all we do is look at Cincinnati and Rhode Island.”

Why Do We Even Care?

Over the series we have created about RAPM, we’ve identified several of the benefits gained by regularization while noting the various pitfalls if we simply embrace the numbers. While we know the central limit theorem actually fails and ratings are not necessarily Gaussian at each conditional stint level (part 3), we can perform the regularization to impose a PCA-like solution (part 2) to understanding ratings better than in basic APM (part 1). However, we see that the variances are still relatively inflated and that we do not get a great understanding of player impact; see Kyle Korver above as the standard test case. Instead, we obtain a filtered identification of a player. And instead of relying on this muddled number that lacks a considerable amount of context, we can leverage this technique to impose further filtration on player qualities. This is the case for more recent advanced analytics such as RPM from Jerry Engelmann and PIPM from Jacob Goldstein.

Straying from the technical advancements, we can also leverage RAPM as a “smoothed but biased” estimator for discussing the impact of a player on offense and defense. The reason we suggest “instead of technical advancements” is due to the fact that defining defensive metrics is really hard. A great synopsis of using RAPM to discuss this point, as opposed to creating potentially misleading defensive statistics, is given by Seth Partnow at The Athletic.

However, we can put to rest the commentary that “understanding errors” in RAPM is difficult and instead embrace what these values are really telling us. (So please stop e-mailing me about this topic!)

I’m a little confused about the formula for \hat{sigma}. You write that (N-2P-1)\hat{sigma} = R^T W R, so \hat{sigma} is an N by N matrix. On the other hand, in the code to calculate the variance, (N-2P-1)\hat{sigma} seems to be given by R^T R (what you call ‘errors’). The equation for \Sigma_{\beta} also doesn’t seem to work if \sigma is NxN, so I’m guessing the formula in the code is the correct one. But perhaps I’m missing something.

Thank you for doing this. Maybe I’ll try my hand at computing confidence intervals for related stats like PIPM or BPM, unless their creators already have?

You’re missing the transpose. The matrix is not NxN, but rather (2P+1)x(2P+1).

Whoops! R^T W R definitely isn’t NxN, you’re right, so the dimensions work out either way. So should we be multiplying by R^T R or by R^T W R?

In both the theory and the code it’s R^T*W*R. I think it looks a little off to you because I make a transformation that allows the code to run fast. Processing the weight matrix by itself dramatically increases (thanks to inverses on other data frames) the computation time of the algorithm.

Thanks for your help. I’m trying to replicate your results, so I was also wondering exactly which possession data you fed into your program. Was it from Ryan Davis at https://github.com/rd11490/NBA_Tutorials/blob/master/rapm/data/rapm_possessions.csv? You’re using around 71000 stints, but there are around 240000 rows in that file with possessions != 0. They’re almost certainly single possessions though, and not stints. If we naively combine all of the nonzero possessions with the same lineups together, we get around 63000, so it makes sense that the actual number of stints is a bit more than that.

For the post, I used whatever Ryan’s latest dump was (at that time), since that is publicly available. His parser has similar errors to my own parser (some stints had like 70 possessions) due to wonkiness in the pbp. Also, we tend to count free throws differently. For instance, offensive possessions can score negative points if they create a technical foul and the defense makes the free throw. I believe if the offense loses you points, they should be charged. Some (read that as MANY) folks will “throw out” technical fouls. The ones I throw out are ones due to coaches and players on the bench.

At that time, from the data Ryan sent me, we had almost identical values for RAPM across the board. After this post, PositiveResidual also obtained very close values (he didn’t know the tuning parameters we both used).

Yeah, my values are (unsurprisingly) about the same as well, but for some reason all the variances I can check against yours are about twice as high. I assume this is just the result of a coding error on my part, but I’m stumped about what it could be. That’s why I was wondering whether it could instead stem from different inputs. Thanks again!

Interesting. I know Positive Residual’s method of using MCMC to estimate variances came up with values within 5% of the theoretical variance estimates, which is common for MCMC methods.

I wouldn’t know why something would be twice as high, other than maybe the correlation was accidentally doubled/squared? But that would be approximately a 1.5x increase.

Finally got back to this. I appreciate all your responses!

I tried again using data from a different source (https://github.com/903124/NBA_oneway_stint), and I got the exact same RAPM values for the top 25. The problem with the variances being about twice as high persists though, and I think I’ve found the reason why. To calculate R^T W R, I was using R = ratings2 - stints*beta, and WR = ratings - weights*beta. This should be correct because ratings is WY and weights is WX (from what I can tell, this is the transformation you mentioned). However, if I instead use R^T R (which indeed is about half the size), then I match your variances. Is there some other mistake I’m making here? It seems like my method of calculating R^T W R should work.
